If you've sat through any "intro to cloud development" presentation, you've seen the argument for cloud architecture built around infrastructure that scales up and down. Maybe you run a coffee shop bracing for the onslaught of pumpkin-spice-everything, or maybe you're just in a 9-5 office where activity dries up after hours. In any event, this scale-up / scale-down traffic shaping is well-known to most technologists at this point.

But, there's another type of activity shaping I'd like to explore. In this case, we're not looking at the aggregate effect of thousands of PSL-crazed customers -- we're going to look at the lifecycle of a single customer.

Consider these two types of customers -- both very different from one another. The first profile is what you'd expect to see for a customer with low acquisition cost and low setup activity -- an application like Meta might have a profile like this, especially when you expect users to keep consuming at a steady rate.

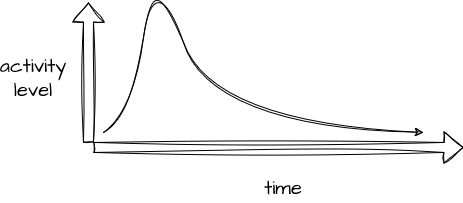

Compare this to a customer with high acquisition cost and high setup activity -- this could be a customer with a long sales lead time or one with a lot of setup work, configuration, training, or the like. Typically, these are customers with a higher per-unit revenue model to support this work. Examples of a profile like this include sales of high-dollar durable goods, financial instruments like loans, insurance policies, and so on.

So, What?

Why, exactly, would we care about a profile like this? I believe this sort of model facilitates some important conversations about how a company is allocating software spend relative to customer activity, costs, and revenue. I also think it can help us understand the architectural needs of the services supporting this lifecycle.

Note that this view of customer activity has some resemblance to a discipline called Customer Lifecycle Management (CLM). Often associated with Customer Relationship Management (CRM), CLM is typically used to understand activities associated with acquiring and maintaining a customer using five distinct steps: reach, acquisition, conversion, retention, and loyalty.

Rather than just working to understand what's happening with your customer across the time axis, consider some alternative views. We can map costs and revenue per customer over the same timeline, for example. Until recently, the cost to acquire a customer could be known only on an aggregate basis -- computed from total costs across all customers and allocated as an estimate. But as FinOps practices improve in fidelity and adoption, information like this could become a reality:

FinOps promises to give us the tools to track the investment in software you've purchased, SaaS tools, and of course, custom tools and workflows you've developed. Unless these tools are extremely mature in your organization, you'll likely need to allocate costs to compute these values. Also note that these costs are typically separated on an income statement -- those occurring pre-sales tend to show up as Cost of Goods Sold (COGS), while those after the sale are operating expenses (OpEx). If you intend to allocate COGS per customer, these costs will need to be interpolated: the customers you don't close generate no revenue at all, so what you spent pursuing them has to be spread across the customers you do close.
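One way to picture that interpolation is the minimal sketch below. It assumes pre-sale spend can be tracked per prospect and that the cost of lost prospects is spread evenly across closed customers; the `Prospect` type, the `allocated_acquisition_cost` function, and all figures are hypothetical, and a real allocation might weight by deal size or segment instead.

```python
from dataclasses import dataclass


@dataclass
class Prospect:
    name: str
    presale_cost: float  # hypothetical: directly tracked pre-sale spend
    closed: bool         # True if the prospect became a customer


def allocated_acquisition_cost(prospects):
    """Return acquisition cost per closed customer.

    Assumption: the spend on prospects that never closed is split
    evenly across the customers that did close.
    """
    closed = [p for p in prospects if p.closed]
    if not closed:
        return {}
    lost_cost = sum(p.presale_cost for p in prospects if not p.closed)
    share = lost_cost / len(closed)
    return {p.name: p.presale_cost + share for p in closed}


prospects = [
    Prospect("A", 1200.0, True),
    Prospect("B", 800.0, False),
    Prospect("C", 1000.0, True),
    Prospect("D", 400.0, False),
]
costs = allocated_acquisition_cost(prospects)
for name, cost in costs.items():
    print(f"{name}: {cost:.2f}")
```

Here the two lost prospects cost 1,200 in total, so each closed customer carries an extra 600 on top of its own tracked spend.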

Less commonly considered is the architectural connection to a view like this. You might see a pattern like this if you're writing an insurance policy and then billing for it periodically:

Not only do these periods reflect different software needs, they also reflect different opportunities to learn about your customer. Writing a new insurance policy is critically important to an insurance company -- careful consideration is made to be sure the risk of the policy is worth the premium to be collected. For that reason, an insurance company will invest a lot of time and energy in this process, and much will be learned about the customer here:

On the other hand, the periodic billing cycle should be relatively uneventful for customer and carrier alike, and less useful information is found here -- at least when the process goes smoothly.

I believe the high-activity / high-learning area also suggests a high-touch approach to architecture. Specifically, I think this area is likely where highly tailored software is appropriate for most enterprises, and it also likely yields opportunities for data capture and process improvement. Pay attention to data gathered here and take care not to discard it if possible. Considering these activity profiles temporally may also lead us to identify separation among services, so in this case, the origination / underwriting service(s) are very likely different from the services we'd use after that customer is onboarded:

What's next?

I'm early in exploring this idea, but I expect to use this as one signal to influence architectural decisions. I expect that an activity-over-time view of customers will help focus conversations about technologies, custom vs. purchased software, types of services and interfaces, and so on.

At a minimum, I think this idea is an important part of modeling services to match activity levels, as not all customers hit the same peaks at the same time.
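As a rough illustration of that last point, the sketch below sums one per-customer activity profile across several customers who start at different times. Everything in it is an assumption made for illustration: the step-shaped profile, the activity levels, the start periods, and the function names.

```python
def activity_profile(setup_level, steady_level, setup_periods, total_periods):
    """Hypothetical per-customer profile: high during setup, low afterwards."""
    return [
        setup_level if t < setup_periods else steady_level
        for t in range(total_periods)
    ]


def aggregate_load(profile, start_periods, horizon):
    """Sum one profile across customers who onboard at different periods."""
    load = [0] * horizon
    for start in start_periods:
        for offset, level in enumerate(profile):
            t = start + offset
            if t < horizon:
                load[t] += level
    return load


# A high-setup customer: two busy periods, then steady low activity.
profile = activity_profile(setup_level=10, steady_level=1,
                           setup_periods=2, total_periods=6)

staggered = aggregate_load(profile, start_periods=[0, 3, 6], horizon=12)
simultaneous = aggregate_load(profile, start_periods=[0, 0, 0], horizon=12)

print("staggered peak:   ", max(staggered))
print("simultaneous peak:", max(simultaneous))
```

Both cases do the same total work, but the peak a setup-phase service must absorb depends heavily on when customers arrive, which is one reason to size origination services separately from steady-state ones.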