I initially learned about CapEx and OpEx about five years ago in the sense that I knew they were accounting terms. It's been an ongoing journey for me to learn why they keep coming up in software development. These terms popped up on my radar again recently in a conversation on the excellent ProKanban community Slack channel.[1]

The conversation prompt was an open-ended "what's up with these?", and I hopped in with some thoughts, summarizing the understanding I've accrued over the years.

Basic Definitions

First, the easy stuff. These accounting terms do have some good definitions,[2] and that's a great place to start. Capital Expenditures (note "expenditure" vs. "expense") are considered long-term purchases or investments, whereas Operating Expenses are short-term expenses. The key point in these definitions is that CapEx is recorded against an asset account, while OpEx is recorded as an expense.

In software development, most of us see both terms applied to what we perceive as the same activity (developing the software), yet when that time is accounted for, it's treated very differently depending on the label. Let's start with CapEx.

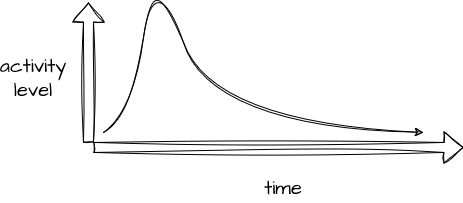

It's important to understand that the accounting definition of CapEx predates its use in tracking software development expenses (also true of OpEx). The original intent or application of CapEx was to account for the purchase of an expensive asset (truck, building, machine) which would then be depreciated over time to reflect its diminishing worth. For software purchased as a one-time license fee, this analogy makes some sense, and starting in the mid-1980s, this model was extended to software built in-house. In this scenario, the asset is accrued, or built up, in the period prior to "go-live" and then depreciated afterwards. I have several issues with how this relates to modern development practices, but we'll come back to those. In accounting terms, there is an asset that grows as the software is built, and it begins to be tracked as an expense after that go-live event.

In the case of OpEx, the expense and the accounting are considered short-term outlays, tracked against current-period performance. This is a simpler accounting concept, probably more aligned with our experience in development -- we spend time doing a task, and that time is paid for and shows up as an expense in that period. Note that even in the case where CapEx is used to account for the development of a software asset, there will still be expenses associated with running that software, and these costs will be treated as OpEx. A further note on OpEx -- if you've encountered FinOps, note that this field is primarily associated with OpEx.

Accounting deep-dive

For a better understanding of CapEx, I think some examples might be helpful. Be forewarned: I'm going to do a T-chart-based deep dive here, so skip ahead if you'd like, but I think if you're in a position where you need to understand why we're tracking CapEx and OpEx, a basic understanding of these concepts is appropriate. In the interest of simplicity, I'm also taking some liberties by combining accounts that are likely tracked separately. Let's start with that truck purchase example - a simple cash purchase of a truck (asset) using cash (asset):
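As a rough stand-in for the T-chart, here is a minimal Python sketch of that purchase. The $50,000 price and the account names are assumptions picked for illustration, not figures from a real ledger.

```python
from collections import defaultdict

# Hypothetical figure: a $50,000 truck bought with cash.
TRUCK_PRICE = 50_000

# Each T-account tracks debits (left side) and credits (right side).
ledger = defaultdict(lambda: {"debit": 0, "credit": 0})


def post(account, debit=0, credit=0):
    """Record one side of a journal entry on the named account."""
    ledger[account]["debit"] += debit
    ledger[account]["credit"] += credit


# Debit the asset acquired, credit the asset given up.
post("Truck (asset)", debit=TRUCK_PRICE)
post("Cash (asset)", credit=TRUCK_PRICE)

total_debits = sum(sides["debit"] for sides in ledger.values())
total_credits = sum(sides["credit"] for sides in ledger.values())

for name, sides in ledger.items():
    print(f"{name:15} debit {sides['debit']:>7,}  credit {sides['credit']:>7,}")
print("balanced:", total_debits == total_credits)
```

Both accounts in this sketch are asset accounts, and no expense account is touched.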

Here, we're exchanging one asset for another in a transaction that affects the balance sheet, but does nothing to an income statement. As the truck ages, depreciation is recorded - this reduces the net asset value of the truck (as you'd expect, given age & mileage) and we also record an expense that will show up on the income statement:
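A minimal sketch of those depreciation entries follows. It assumes straight-line depreciation of a hypothetical $50,000 truck over five years with no salvage value; the method and the figures are my assumptions for illustration, and other depreciation methods exist.

```python
# Assumed figures for illustration only.
COST = 50_000
SALVAGE_VALUE = 0
USEFUL_LIFE_YEARS = 5


def straight_line_schedule(cost, salvage, years):
    """Return (year, expense, accumulated depreciation, net book value) rows."""
    annual_expense = (cost - salvage) / years
    rows = []
    accumulated = 0.0
    for year in range(1, years + 1):
        accumulated += annual_expense
        rows.append((year, annual_expense, accumulated, cost - accumulated))
    return rows


schedule = straight_line_schedule(COST, SALVAGE_VALUE, USEFUL_LIFE_YEARS)
for year, expense, accumulated, net_value in schedule:
    # Each year: debit Depreciation Expense (income statement) and
    # credit Accumulated Depreciation (reduces the truck's net asset value).
    print(f"year {year}: expense {expense:,.0f}, net book value {net_value:,.0f}")
```

Under these assumptions the expense is the same every year, and the truck's net value reaches zero at the end of its useful life.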

Incidentally, just as software incurs OpEx while it's being used, the truck will, too. Not shown in this example are those additional operating expenses like gas, maintenance, and insurance. Pivoting this example to software, we'd expect to see the Capital represented by the software accumulated as an asset during construction. During this period, the increase in assets represents an increase in equity, but this is likely balanced by the expenses occurring in this same period (payroll, contract payments). Once the software is considered operational, the asset begins to incur depreciation as seen with any other hard asset.
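The software version of this lifecycle can be sketched the same way. The figures here are hypothetical: $40,000 of capitalizable cost per month for a six-month build, then straight-line depreciation (often called amortization for software) over an assumed 48-month useful life.

```python
# Assumed figures for illustration only.
MONTHLY_CAPITALIZED_COST = 40_000
BUILD_MONTHS = 6
USEFUL_LIFE_MONTHS = 48


def software_asset_timeline(monthly_cost, build_months, life_months):
    """Return (month, phase, expense recognized, net asset value) rows."""
    rows = []
    asset = 0.0
    # Construction: spending accumulates as an asset, with no expense
    # recognized for the capitalized portion.
    for month in range(1, build_months + 1):
        asset += monthly_cost
        rows.append((month, "build", 0.0, asset))
    # After go-live: the accumulated asset is written down each month.
    capitalized_total = asset
    monthly_expense = capitalized_total / life_months
    for elapsed in range(1, life_months + 1):
        net_value = capitalized_total - monthly_expense * elapsed
        rows.append((build_months + elapsed, "live", monthly_expense, net_value))
    return rows


timeline = software_asset_timeline(
    MONTHLY_CAPITALIZED_COST, BUILD_MONTHS, USEFUL_LIFE_MONTHS
)
for month, phase, expense, net_value in timeline[:8]:
    print(f"month {month:>2} ({phase}): expense {expense:>7,.0f}, asset {net_value:>9,.0f}")
```

The printed rows cover the build period and the first two months after go-live, which is where the asset stops growing and the expense starts.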

Impact of CapEx

So, what's the point of recording software development in this way? First, this method allows financial accounting statements to reflect the intent of the business when development occurs for a while prior to that software providing any real business value. Consider that OpEx is supposed to represent the cost of goods (or services) sold in the current accounting period. If software is built but not being used yet, it's really not fair to count that expenditure against income generated in that period.

I also believe this method of accounting helps smooth business activity over time -- both the accumulation of the software's asset value and the reduction of that asset through depreciation occur over a period of time, and both streams of accounting entries occur in a fairly predictable way. In a company that's reporting financial results (and especially one that issues guidance about what's expected), this method helps management set expectations with high confidence that the transactions that actually show up on income statements will match them. That's important in any company, but vital in a publicly traded company, where surprises are typically punished.
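To make the timing difference concrete, here is a small sketch comparing the expense that reaches the income statement under each treatment. It assumes a hypothetical $240,000 build in year 1, written down evenly over the following four years; real policies and useful lives will differ.

```python
# Assumed figures for illustration only.
BUILD_COST = 240_000
AMORTIZATION_YEARS = 4


def expensed_immediately(cost, years_after):
    """All of the cost hits the income statement in the build year."""
    return [cost] + [0] * years_after


def capitalized(cost, years_after):
    """No expense in the build year, then equal annual charges."""
    return [0] + [cost / years_after] * years_after


opex_view = expensed_immediately(BUILD_COST, AMORTIZATION_YEARS)
capex_view = capitalized(BUILD_COST, AMORTIZATION_YEARS)

for year, (opex, capex) in enumerate(zip(opex_view, capex_view), start=1):
    print(f"year {year}: expensed {opex:>9,.0f}  capitalized {capex:>9,.0f}")

# Both treatments recognize the same total; only the timing differs.
print("same total:", sum(opex_view) == sum(capex_view))
```

The capitalized series has a much lower peak than the expensed one, which is the smoothing effect described above.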

Less clear to me is whether firms take any liberties in capitalizing some software and not others to steer asset values, since these balance sheet and income statement accounts have an impact on measures commonly attributed to company performance.[3] My understanding of GAAP suggests that an accounting policy shouldn't really vary from one expenditure or project to the next (such that one project is capitalized and the next isn't), but I'd love to hear from practitioners who could walk through particulars.

Flaws in the model

I promised I'd get to some gripes on CapEx and OpEx. Here they are. The elephant in the room, which will surprise nobody in software, is the time and cognitive load involved in tracking time that's supposed to be capitalized separately from time that's not. Best case, a team or developer works only on CapEx-based or only on OpEx-based projects in a given period, so all of the time worked in that period can be classified one way, which reduces reporting overhead. Worst case, you need to ask developers to understand from one task to the next whether that task is considered CapEx-related or OpEx-related. This sort of reporting bugs me no end, because it really detracts from brainpower being brought to bear on building software.
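As a sketch of what that tracking amounts to, the snippet below splits a period's cost by treatment and flags the developers who would need task-level tracking. The timesheet rows, task names, and the $100 blended hourly rate are all hypothetical.

```python
from collections import defaultdict

# Hypothetical timesheet rows: (developer, task, hours, treatment).
TIMESHEET = [
    ("dev_a", "build new checkout flow", 30, "capex"),
    ("dev_a", "fix production incident", 10, "opex"),
    ("dev_b", "build new checkout flow", 40, "capex"),
    ("dev_c", "routine maintenance", 25, "opex"),
    ("dev_c", "build new checkout flow", 15, "capex"),
]
HOURLY_COST = 100  # assumed blended rate


def split_by_treatment(rows, hourly_cost):
    """Total cost per treatment for the period."""
    totals = defaultdict(float)
    for _dev, _task, hours, treatment in rows:
        totals[treatment] += hours * hourly_cost
    return dict(totals)


def developers_needing_task_level_tracking(rows):
    """Developers whose period mixes capex and opex work (the worst case)."""
    treatments_seen = defaultdict(set)
    for dev, _task, _hours, treatment in rows:
        treatments_seen[dev].add(treatment)
    return sorted(dev for dev, kinds in treatments_seen.items() if len(kinds) > 1)


totals = split_by_treatment(TIMESHEET, HOURLY_COST)
mixed = developers_needing_task_level_tracking(TIMESHEET)
print("cost by treatment:", totals)
print("task-level tracking needed for:", mixed)
```

In this made-up period only one of the three developers fits the best case; the other two have to classify each task.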

The other big problem I have with the CapEx model for software is the mental model it suggests. In rare cases, software may have been a static one-time expenditure, and accounting for this like it's a filing cabinet or a cement truck may have made sense. In an agile development world, however -- especially one in which we expect to ship an MVP and add onto it while we operate it -- this model becomes horribly messy at best and downright harmful at worst. I strongly suspect that the consensus among developers is that the juice isn't worth the squeeze in this case, but again, I'd love to hear from accountants who could speak to the financial reporting benefits of CapEx for software.

1. ProKanban community website, Twitter/X, and Slack links.

2. Some additional good explorations of these terms can be found on Investopedia and Wallstreetprep.

3. These links are good follow-on reading to learn about key accounting KPIs and metrics. Growth in assets can also be seen as impacting a company's future returns. Finally, this thread on Reddit touches on financial reporting and tax implications of these practices.